How to Build a WhatsApp AI Assistant

From Idea to Production

The real problem we solved

An orthopedic institute reached out with a concrete issue: pre-operative nurses spent hours on the phone answering the same questions. “Can I drink water before surgery?”, “Where do I check in?”, “Can I keep taking my regular medications?”. The same queries, hundreds of times a month, in the 48 hours before each scheduled procedure.

The obvious fix was automation, but not a brittle rule-based chatbot nobody trusts. We needed a smart conversational assistant that understands context, replies in natural language, and crucially knows when it must not answer.

This post documents how we built it, what we learned, and why the same architecture works for any company that wants WhatsApp automation without losing quality or control.

Why WhatsApp (and why now)

WhatsApp has more than 2 billion active users. In Spain and Latin America it is not “one messaging option among many”, it is the default channel. Patients confirm appointments on WhatsApp; customers ask about orders; staff coordinate shifts.

For years, automating that channel meant enterprise contracts, heavy platforms, or fragile hacks. That changed when Twilio made the WhatsApp Business API accessible through a simple webhook model.

Combined with today’s language models (Claude, GPT-4), the barrier to shipping a useful conversational assistant is low enough that a small team can reach production in days, not months.

Case study: a pre-operative assistant

We built a pilot for healthcare: an assistant that handles the most frequent questions before surgery, without enterprise platform costs or the rigidity of traditional chatbots.

What it is not

A fixed-path rule chatbot.

A general-purpose assistant with unbounded scope.

An AI that fabricates answers.

An expensive proprietary platform.

What it is

A conversational agent with bounded context.

A technically simple and economically viable solution.

A system that keeps per-user conversation history.

An architecture that grounds answers in domain knowledge.

The core design principle: grounding

The key constraint is grounding: the model only answers what it can support from the provided context. If a question is out of scope, the assistant states the limit and routes to the right human channel.

This is not a limitation, it is the main feature. In clinical settings, an agent that makes things up is worse than no agent. In most industries, “I don’t know, contact X” beats wrong information.

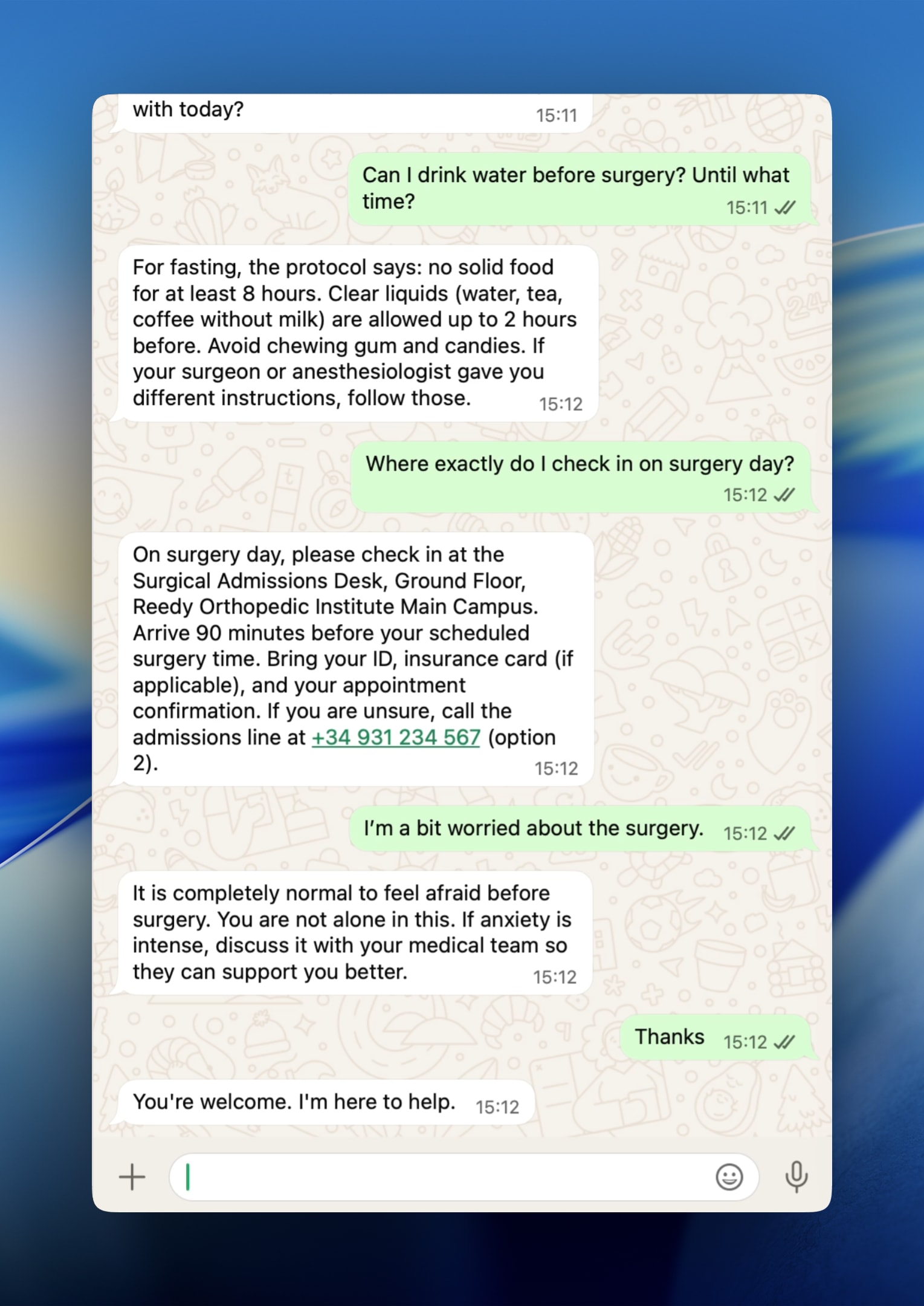

Example conversation

In the pilot, the assistant answered fasting and check-in questions accurately, citing the knowledge base and closing with safety clauses (for example, reminding patients that if their surgeon gave different instructions, those take precedence).

Patient:

Can I drink water before surgery? Until what time?

Agent:

For fasting: no solid food for at least 8 hours before surgery. Clear liquids (water, plain tea, black coffee) are allowed up to 2 hours before. No gum, hard candy, or milk-based drinks after midnight. If your surgeon gave you different instructions, follow those.

That mix — LLM fluency, structured context, and explicit uncertainty handling — is what separates a modern assistant from a static FAQ or a rigid decision tree.

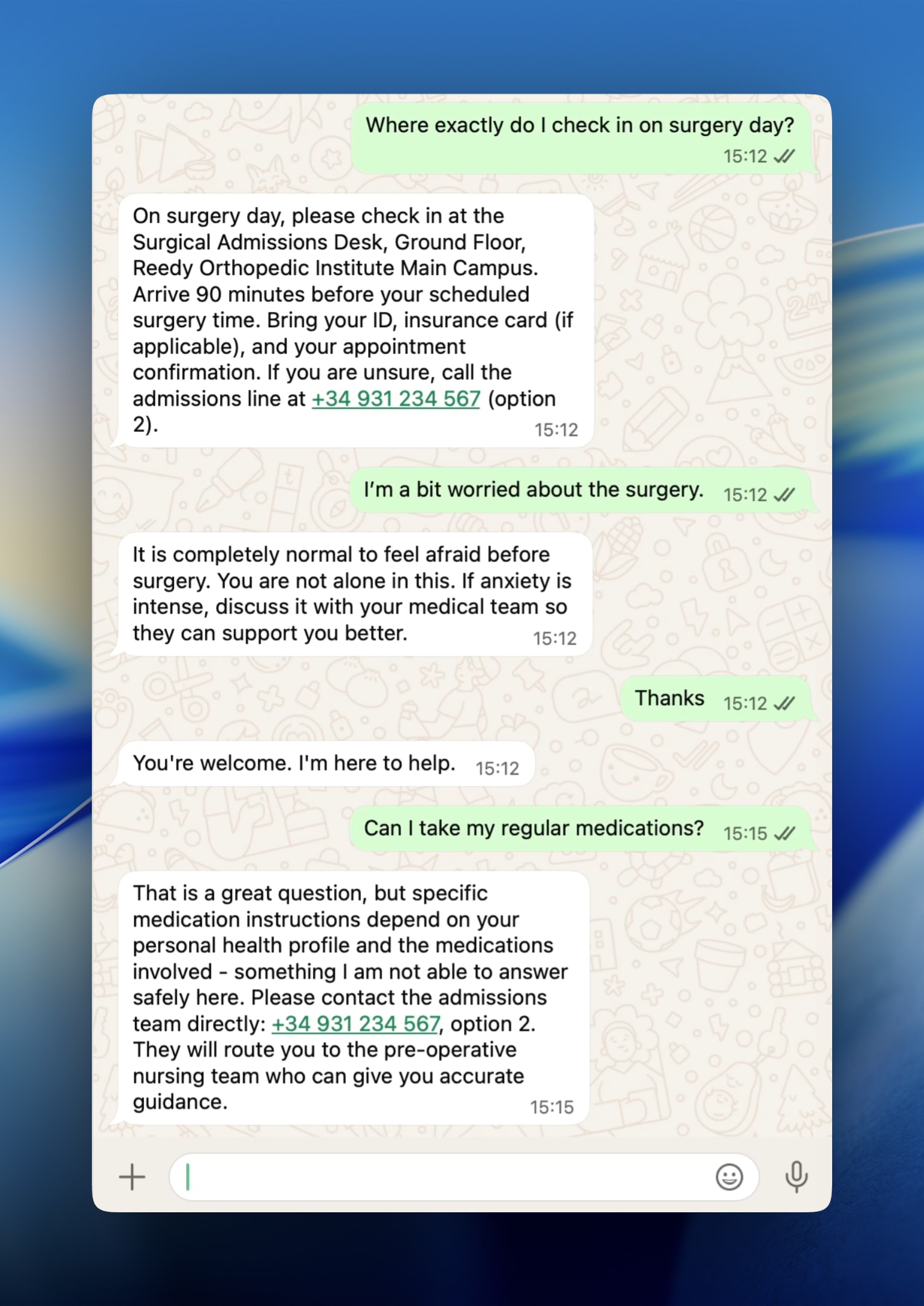

Handling out-of-scope questions

Real value is not only what the assistant answers, but how it handles what it does not know. For small pilots, clear system-prompt instructions are often enough. At scale you add intent classification, confidence scores, active monitoring, and feedback loops from human escalations.

Patient:

Can I take my regular medications?

Agent:

That is a great question, but specific medication instructions depend on your personal health profile and the medications involved, something I am not able to answer safely here. Please contact the admissions team directly: +34 931 234 567, option 2. They will route you to the pre-operative nursing team who can give you accurate guidance.

An assistant that says “I don’t have that information, call here” is infinitely more valuable than one that guesses.

System architecture

The system has four main parts: WhatsApp (user) → Twilio (HTTPS webhook) → Node.js + Express server (session + LLM) → reply via Twilio REST API back to the user.

WhatsApp (user)

The user sends the message.

Twilio Messaging API

HTTPS POST (webhook); forwards to your endpoint.

Node.js + Express server

Session store (memory / Redis), per-user history, retrieval from a preprocessed knowledge database (RAG), and LLM call with system prompt plus retrieved context.

WhatsApp (reply)

Twilio delivers the assistant message in the same thread.

- Twilio

WhatsApp Business API compliance, phone numbers, delivery. Your server talks to Twilio, not to WhatsApp directly.

- Node.js + Express

Receives the webhook, parses the message, loads history, retrieves relevant chunks from a preprocessed knowledge store (RAG), calls the LLM, and sends the response with Twilio’s REST client.

- Session store

Per-user history. An in-memory Map works for a prototype; production needs Redis or a persistent store with TTL.

- LLM API

System prompt, constraints, and knowledge base; history is sent as a messages array on every call.

Implementation: the code that matters

1. The system prompt: the most important engineering decision

The prompt defines what the agent knows, how it behaves, and what it refuses to do. Note: in more advanced production setups, the knowledge base is usually not inlined: you combine RAG, internal APIs, knowledge versioning, and per-user context. If you want to ship this faster, Reedy helps teams take conversational AI systems to production with an advanced RAG stack.

// prompts/systemPrompt.js

const SYSTEM_PROMPT = `

You are a pre-operative patient assistant for the Reedy Orthopedic Institute.

Your role is to answer patient questions in the 48 hours before elective surgery.

KNOWLEDGE BASE:

- Fasting protocol: no solid food for at least 8 hours before surgery.

Clear liquids (water, plain tea, black coffee) are allowed up to 2 hours before.

No chewing gum, hard candy, or milk-based drinks after midnight.

- Check-in location: Surgical Admissions Desk, Ground Floor, Main Campus.

Arrive 90 minutes before scheduled surgery time.

Required documents: government-issued ID, insurance card (if applicable), appointment confirmation.

Admissions line: +34 931 234 567, option 2.

- Common emotional responses: anxiety before surgery is normal and expected.

Acknowledge the patient's feelings before providing information.

If anxiety is described as severe or debilitating, recommend discussing with the medical team.

BEHAVIOR RULES:

1. Answer only questions you can answer from the knowledge base above.

2. If a question is outside scope, say so clearly and provide the admissions line.

3. Never invent specific medical information not present in the knowledge base.

4. Keep responses concise. Patients read these on a phone screen.

5. If the patient gives different instructions from their surgeon, always defer to the surgeon.

6. Greet the patient by first name on the first message if their name is known from context.

7. Respond in the same language the patient uses.

`;

module.exports = SYSTEM_PROMPT;

Critical design choices

Rule 2 is the safety valve: a grounded agent that escalates to humans beats one that improvises. Non-negotiable in clinical contexts; good practice almost everywhere else.

Rule 7 gives multilingual input without extra routing logic: the model detects language and responds accordingly.

2. Session management: keeping context

MAX_HISTORY_TURNS caps cost and the context window: APIs charge per token, and unbounded history is a billing risk.

// sessions/sessionStore.js

const sessions = new Map();

const MAX_HISTORY_TURNS = 10; // keep last 10 exchanges to manage context window

function getHistory(userId) {

return sessions.get(userId) || [];

}

function addTurn(userId, role, content) {

const history = getHistory(userId);

history.push({ role, content });

// trim to max turns (each turn = 2 messages: user + assistant)

const maxMessages = MAX_HISTORY_TURNS * 2;

if (history.length > maxMessages) {

history.splice(0, history.length - maxMessages);

}

sessions.set(userId, history);

}

function clearHistory(userId) {

sessions.delete(userId);

}

module.exports = { getHistory, addTurn, clearHistory };

3. LLM client: the integration

max_tokens: 512 is intentional: on mobile, an answer that forces scrolling is bad UX.

// llm/claudeClient.js

const Anthropic = require('@anthropic-ai/sdk');

const SYSTEM_PROMPT = require('../prompts/systemPrompt');

const client = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

async function generateResponse(history, userMessage) {

// append the new user message to history before calling the API

const messages = [

...history,

{ role: 'user', content: userMessage }

];

const response = await client.messages.create({

model: 'claude-sonnet-4-20250514',

max_tokens: 512, // keep responses short for mobile reading

system: SYSTEM_PROMPT,

messages

});

return response.content[0].text;

}

module.exports = { generateResponse };

4. Webhook server: orchestration

Always return 200 to Twilio, even on errors, to avoid retries that duplicate messages. The catch block should send a safe fallback to a human. Use req.body.From as-is (it includes the whatsapp: prefix) for the session key and outbound destination.

// server.js

require('dotenv').config();

const express = require('express');

const twilio = require('twilio');

const { getHistory, addTurn } = require('./sessions/sessionStore');

const { generateResponse } = require('./llm/claudeClient');

const app = express();

app.use(express.urlencoded({ extended: false }));

const twilioClient = twilio(

process.env.TWILIO_ACCOUNT_SID,

process.env.TWILIO_AUTH_TOKEN

);

app.post('/webhook', async (req, res) => {

// Twilio sends form-encoded data

const incomingMessage = req.body.Body?.trim();

const fromNumber = req.body.From; // e.g. "whatsapp:+34612345678"

if (!incomingMessage || !fromNumber) {

return res.status(400).send('Missing required fields');

}

try {

// 1. Retrieve conversation history for this user

const history = getHistory(fromNumber);

// 2. Generate response from LLM

const reply = await generateResponse(history, incomingMessage);

// 3. Persist the new turn

addTurn(fromNumber, 'user', incomingMessage);

addTurn(fromNumber, 'assistant', reply);

// 4. Send reply via Twilio

await twilioClient.messages.create({

from: process.env.TWILIO_WHATSAPP_NUMBER,

to: fromNumber,

body: reply

});

// Twilio expects a 200 response even if you send the reply programmatically

res.status(200).send('OK');

} catch (error) {

console.error('Webhook error:', error);

// Send a safe fallback message rather than letting the conversation die

await twilioClient.messages.create({

from: process.env.TWILIO_WHATSAPP_NUMBER,

to: fromNumber,

body: 'We are experiencing a temporary issue. Please call +34 931 234 567 for immediate assistance.'

});

res.status(200).send('OK'); // still 200 to prevent Twilio retry loops

}

});

app.listen(process.env.PORT, () => {

console.log(`Webhook server running on port ${process.env.PORT}`);

});

From prototype to production: four pillars

- 1. Webhook signature validation

Twilio signs each request (HMAC-SHA1). Validate with twilio.validateRequest(). Without this, anyone who finds your URL could inject traffic.

- 2. Persistent session store

Replace the in-memory Map with Redis and a TTL (e.g. 24h) so stale conversations do not grow forever.

- 3. Rate limiting

Per-phone limits (express-rate-limit + Redis) reduce abuse and surprise LLM bills.

- 4. Structured logging and monitoring

Log inbound, outbound, and errors; avoid storing raw phone numbers when not required. Alert on latency and error spikes.

Observed results from the real pilot

- 78% coverage

Fasting, check-in, and anxiety acknowledgment covered 78% of traffic: a focused knowledge base absorbs most real-world load.

- Acceptable latency

Median ~2.1s and p95 ~4.8s from webhook receipt to delivery; reasonable for async messaging.

- 11% escalation rate

Questions routed to admissions because they were out of scope: by design, the agent is not meant to handle everything.

- Qualitative satisfaction

Patients valued 24/7 availability, especially after hours when pre-op anxiety peaks.

Use cases in other industries

- Retail

Order tracking, stock, returns.

- Real estate

Viewings, availability, contract FAQs.

- Professional services

Appointment prep, meeting logistics, FAQs.

- Education and HR

Reminders, materials, internal policies — always with grounding and escalation.

The right question is not “can WhatsApp do this?” but “do we have a knowledge body a well-instructed model can reliably draw from?”. If yes, the technical path is usually shorter than teams expect.

Lessons and recommendations

Twilio + Node.js + a current LLM is a genuinely production-viable stack for a wide range of conversational automation.

What makes an assistant useful or harmful is: (1) system prompt quality, (2) grounding discipline, (3) robust failure paths to humans.

The implementation shown is on the order of ~150 lines of code; operational complexity lives in deployment, knowledge governance, and monitoring — not only in the app layer.

Next steps

1. Which repetitive questions consume your team’s time?

2. Do you have documentation or structured knowledge that answers them?

3. Is there a clear human channel when automation cannot help?

If all three are yes, the technical path is shorter than you think.

Let’s build together

We combine experience and innovation to take your project to the next level.